HPC center

Parallel scaling

SCM cooperates with most major hardware vendors to optimize the performance of the Amsterdam Modeling Suite (AMS) on all popular computer platforms. This includes fine-tuning the code for different compilers and hardware configurations. The SCM team continually works to improve performance, including parallel scaling on the latest HPC platforms.

Our standard binaries work well on typical platforms (see also the hardware FAQ and the summary of a 2020 interactive Q&A on hardware), including large-scale clusters with fast interconnects. Although our software is already highly optimized, we are happy to work with HPC system administrators to build AMS from source on their systems, either to tune performance further or to port to non-standard systems.

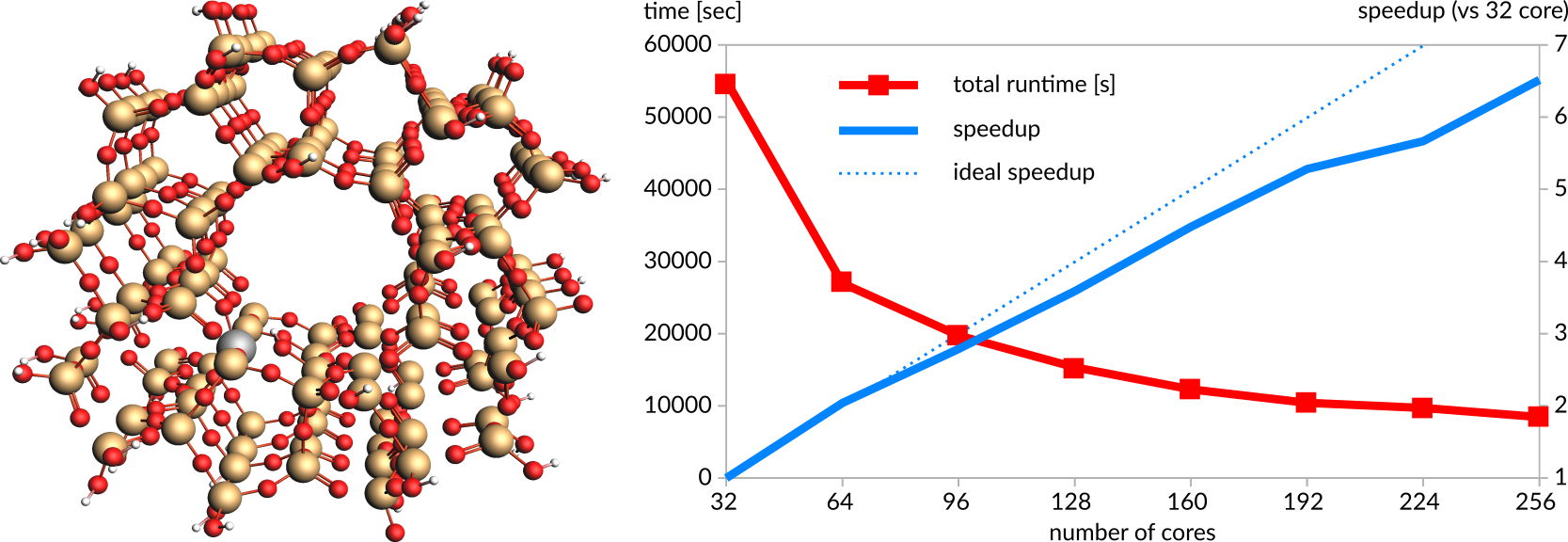

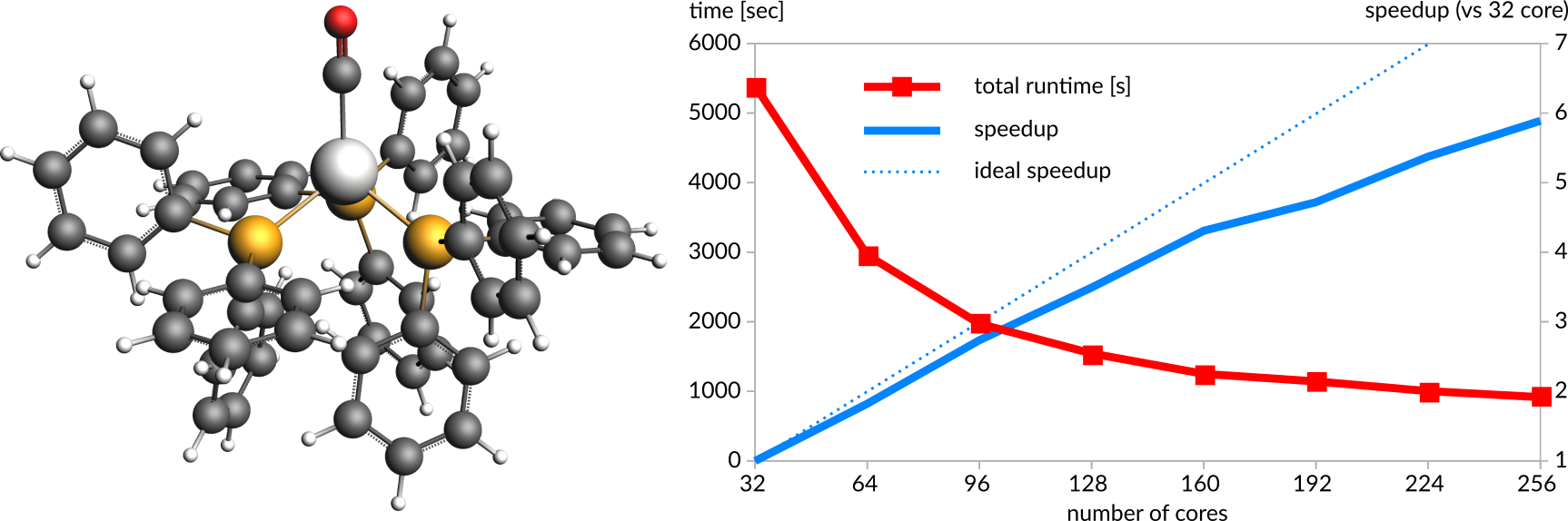

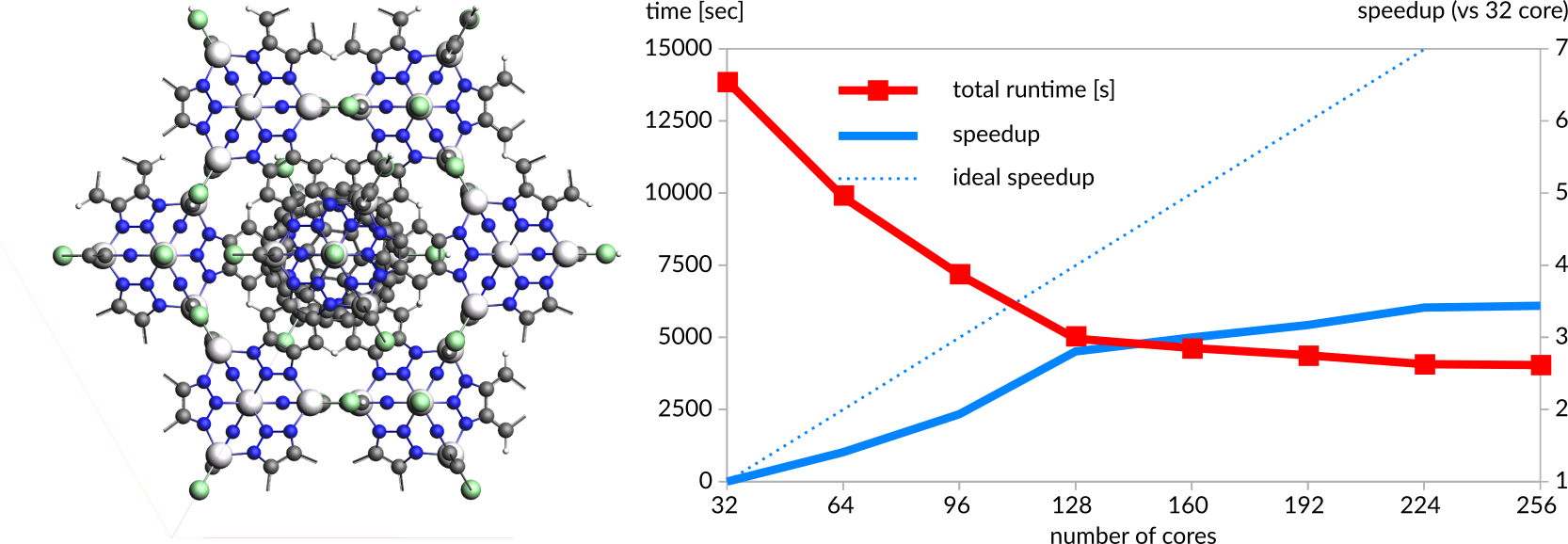

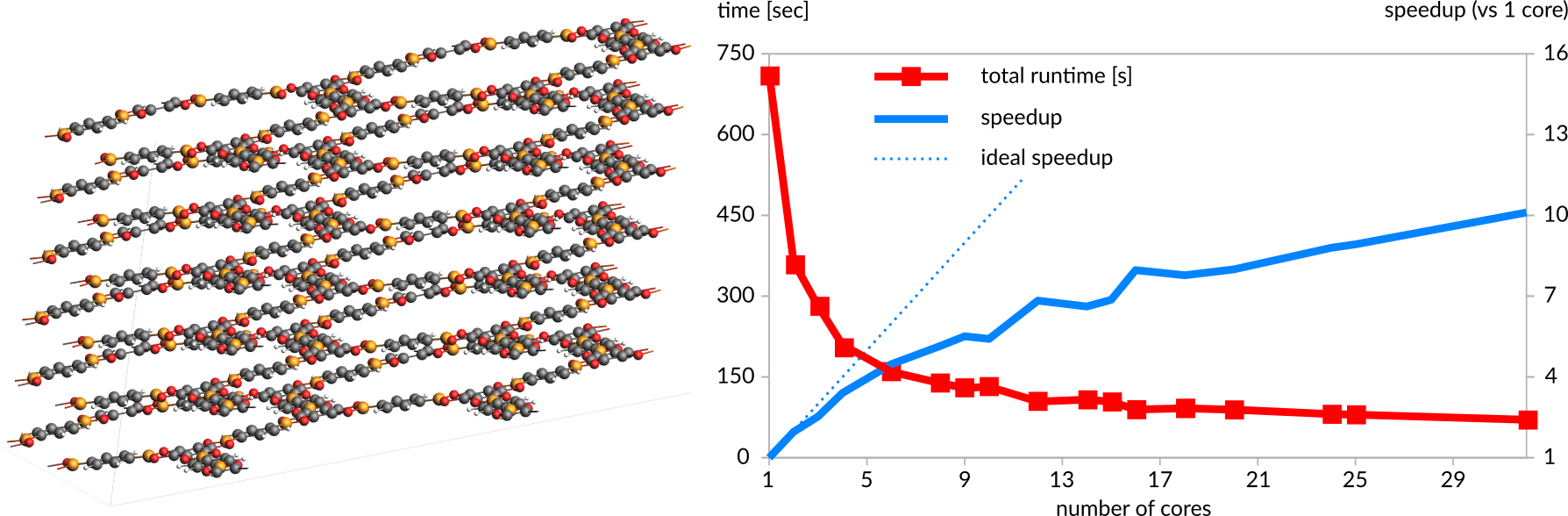

Below are some parallel scaling examples on 32-core Intel(R) Xeon(R) Gold 6130 CPU @ 2.10GHz nodes with 192 GB RAM.

M06-L meta-GGA force calculation (geometry step) of a Si127AlO293H75 Zeolite cluster model with a TZP basis set (496 atoms, 9473 STO basis functions) with ADF

BP86 GGA force calculation (geometry step) of a PtC55H45OP3 complex with a TZ2P basis set (105 atoms, 1051 STO basis functions) with ADF

Periodic PBE GGA force calculation (geometry step) of a Zn40C204H48Cl32N144 MOF crystal with an embedded Buckyball molecule with a TZ2P basis set with Γ-point sampling (468 atoms, 12624 STO basis functions) with BAND

SCC-DFTB (matsci-0-3 parameters) single-point calculation of a six-layer COF C720H288B144O288 (1440 atoms), with scaling measured within a single 32-core node

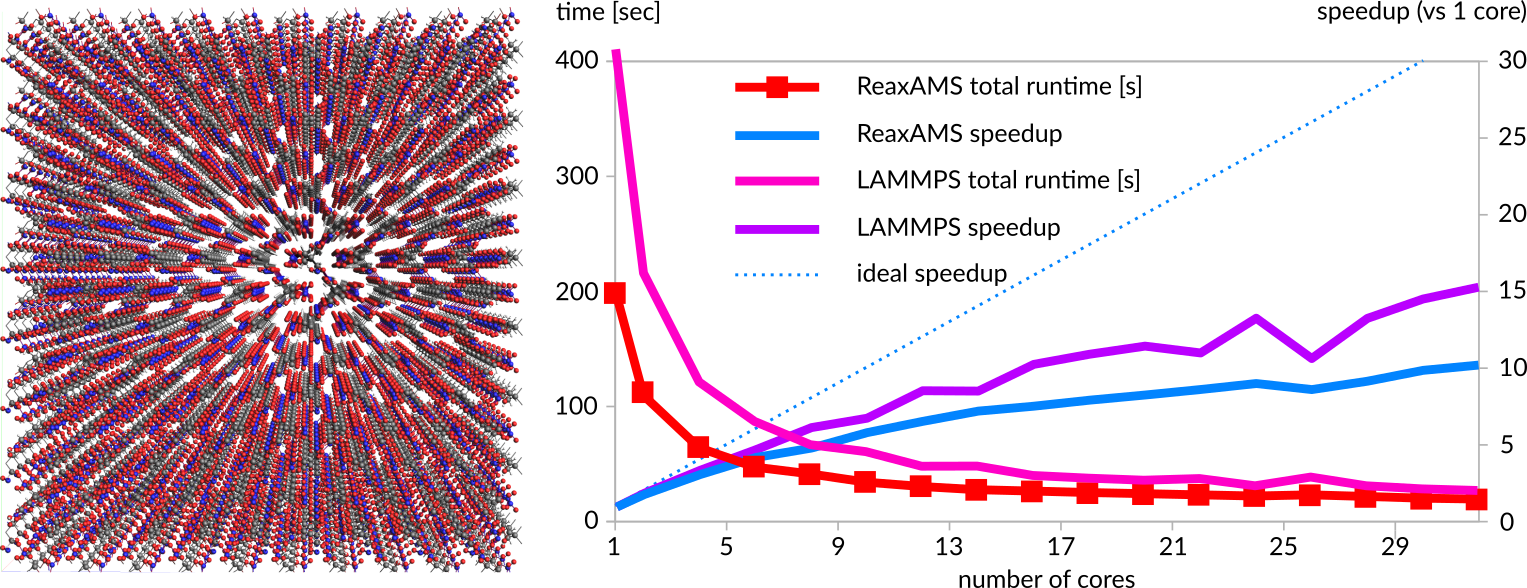

ReaxAMS 10 fs molecular dynamics simulation (100 steps) of a C5600H8960N4480O13440 PETN supercell (32480 atoms) and comparison with LAMMPS

Most of our software modules (ADF, BAND, DFTB, ReaxFF) have been efficiently parallelized for both shared-memory and distributed-memory systems, such as multi-core multi-CPU machines and various Linux clusters. For many standard calculations, including NMR, analytical Hessians, and TDDFT calculations, ADF scales well up to hundreds of CPUs.

If you are interested in trying out parallelization yourself, request a trial and indicate how many CPUs you want to test. For supercomputer administrators and application scientists, please e-mail us if you want to know more about specialized builds for your particular architecture. We also have HPC benchmark input files for ADF, for DFTB and for ReaxFF (based on the standard PETN benchmark from LAMMPS).

Previous parallel benchmarks for ADF

Hewlett-Packard and SCM ran a large ADF TDDFT benchmark in 2006, followed by a geometry optimization benchmark in 2009. The calculations scaled well up to 128 cores, as summarized in the accompanying white papers. A large amount of memory per core and an InfiniBand interconnect are recommended for optimum performance.

Our Japanese reseller Ryoka, in collaboration with the Japan Association for Chemical Innovation (JACI), has run extensive parallelization tests on the TSUBAME2.0 supercomputer. Each doubling of the number of processors gave a speed-up of more than 1.8 up to 96 processors. Doubling further still gave speed-ups of 1.6 and 1.4 when going to 192 and 384 processors, respectively.
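To put the TSUBAME2.0 figures in perspective, the short sketch below converts a speed-up per doubling into a parallel efficiency. It assumes the quoted 1.6 and 1.4 are speed-ups relative to the previous processor count (96 to 192, then 192 to 384), which is an interpretation of the text rather than something taken from the benchmark report. The function names are illustrative only and are not part of AMS.

```python
# Minimal sketch: parallel efficiency from per-doubling speed-ups.
# Assumption: each quoted number is the speed-up gained by doubling the
# processor count relative to the previous count (ideal value: 2.0).

def doubling_efficiency(speedup_per_doubling: float) -> float:
    """Efficiency of a single doubling step; 1.0 means ideal scaling."""
    return speedup_per_doubling / 2.0


def cumulative_speedups(step_speedups: list[float]) -> list[float]:
    """Running product of per-doubling speed-ups, relative to the starting count."""
    total = 1.0
    result = []
    for s in step_speedups:
        total *= s
        result.append(total)
    return result


if __name__ == "__main__":
    base = 96                      # starting processor count from the text
    steps = [1.6, 1.4]             # 96 -> 192 and 192 -> 384, as quoted
    cumulative = cumulative_speedups(steps)

    procs = base
    for step, total in zip(steps, cumulative):
        procs *= 2
        ideal = procs / base       # ideal speed-up relative to 96 processors
        print(
            f"{procs:4d} processors: step efficiency {doubling_efficiency(step):.2f}, "
            f"speed-up vs {base} processors {total:.2f} "
            f"(efficiency {total / ideal:.2f})"
        )
```

Under this reading, the step to 192 processors runs at about 80% of ideal doubling and the step to 384 at about 70%, so going from 96 to 384 processors gives roughly a 2.2-fold gain instead of the ideal 4-fold.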